Calibrating AI Planning Scores for True Potential in Capevents

In the capevents project, which focuses on event management and planning, artificial intelligence helps optimize many aspects of an event plan, from resource allocation to scheduling. Initially, our AI models generate 'raw' planning scores that indicate the predicted effectiveness or quality of a particular plan.

The Challenge with Absolute Scores

Absolute scores, while seemingly straightforward, can often be misleading without proper context. A score of '85' might sound good, but what does it really mean in the grand scheme of all possible plans? Is '85' excellent, or merely average given the specific constraints and opportunities? Without a clear reference point, interpreting these scores becomes subjective and can lead to suboptimal decision-making.

Consider an analogy: a student scores 85 on a test. Is that good? It depends. If the maximum possible score is 90, it's excellent; if the maximum is 200, it's poor. The absolute score alone doesn't tell the full story; we need context.
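The analogy is plain arithmetic: when the lowest possible score is 0, a score's standing is just its share of the maximum. A minimal sketch, where share_of_maximum is a hypothetical helper used only for illustration:

```python
def share_of_maximum(score: float, max_score: float) -> float:
    """Return score as a percentage of max_score (hypothetical helper; assumes a minimum of 0)."""
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    # Multiply first so that exact cases like 85/200 stay exact.
    return score * 100 / max_score


print(f"{share_of_maximum(85, 90):.1f}%")   # 94.4%
print(f"{share_of_maximum(85, 200):.1f}%")  # 42.5%
```

The same raw 85 lands at roughly 94% in one case and 42.5% in the other, which is exactly the ambiguity calibration is meant to remove.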

Embracing Relative AI Potential Through Calibration

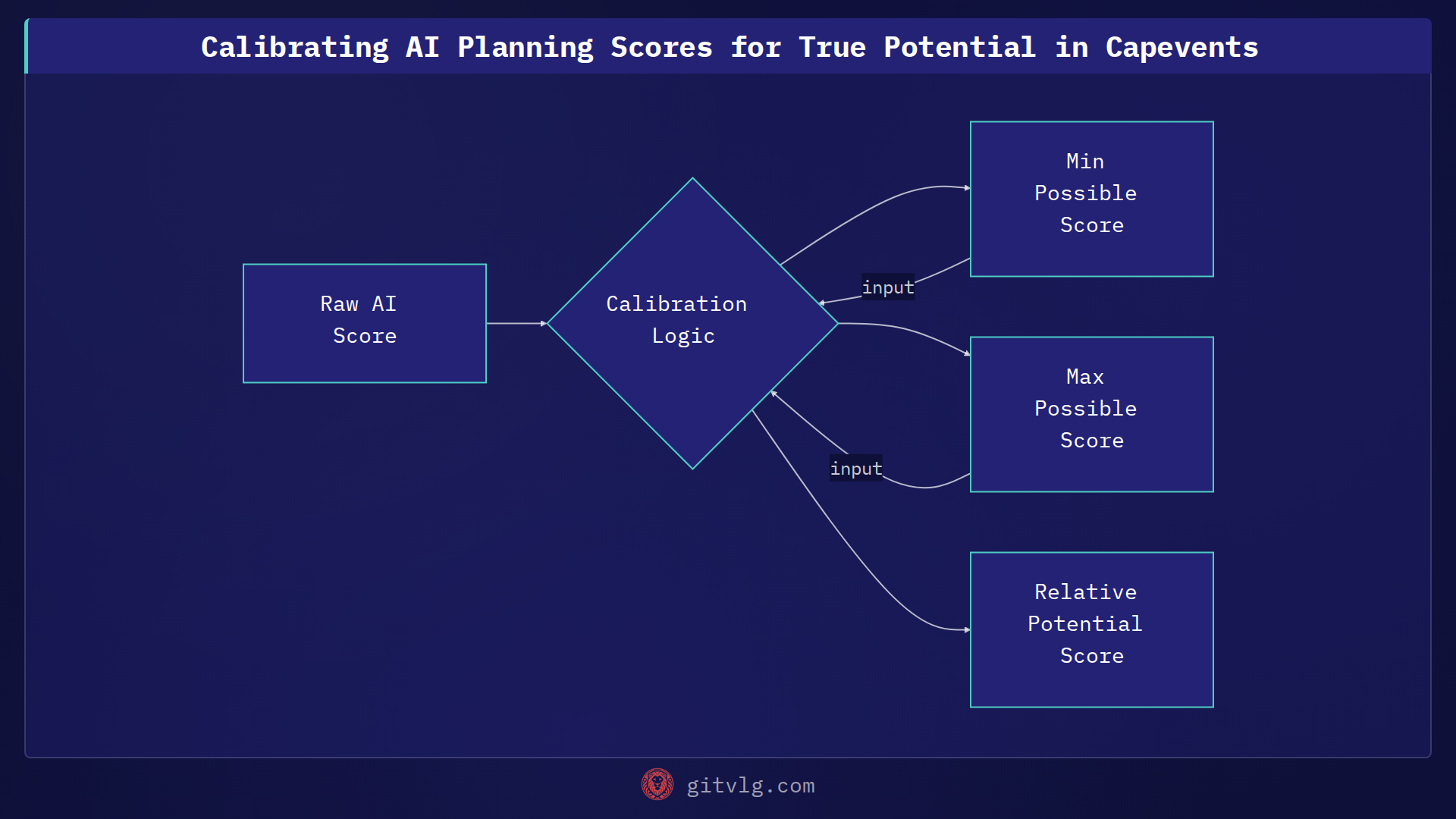

To address this, we implemented a calibration mechanism to transform these absolute planning scores into 'relative AI potential'. This approach provides a normalized perspective, indicating how well a plan performs compared to the best possible outcome or a defined maximum potential within the given context. It shifts the focus from a raw number to a more insightful measure of actualized potential.
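When no fixed maximum is defined for a scenario, one possible reading of "compared to the best possible outcome" is to use the best-scoring candidate plan as the reference point. The sketch below assumes raw scores are non-negative and that higher is better; potential_relative_to_best is a hypothetical helper, not part of capevents. Under this scheme the top candidate always lands at 100%, so it measures standing among the candidates rather than distance from a true optimum.

```python
from typing import Dict


def potential_relative_to_best(scores: Dict[str, float]) -> Dict[str, float]:
    """Express each plan's raw score as a percentage of the best candidate's score.

    Hypothetical helper: assumes non-negative scores where higher is better.
    """
    if not scores:
        return {}
    best = max(scores.values())
    if best <= 0:
        raise ValueError("the best score must be positive to serve as a reference")
    return {name: score * 100 / best for name, score in scores.items()}


print(potential_relative_to_best({"plan_a": 80.0, "plan_b": 60.0, "plan_c": 40.0}))
# {'plan_a': 100.0, 'plan_b': 75.0, 'plan_c': 50.0}
```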

Calibrated scores let planners quickly grasp the value of a proposed plan by showing where it stands relative to what the AI considers optimal. For instance, a plan achieving 95% of its 'relative AI potential' is clearly superior to one achieving only 60%, regardless of their raw scores.
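To make "regardless of their raw scores" concrete, here is a small sketch that ranks plans from different scenarios by calibrated potential instead of by raw score. The CandidatePlan structure, its fields, and the scenario bounds are hypothetical values chosen for illustration; the normalization mirrors the min-max-and-clamp approach used in this post.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CandidatePlan:
    """A scored plan plus the score bounds of its own scenario (hypothetical structure)."""
    name: str
    raw_score: float
    min_possible: float
    max_possible: float

    def relative_potential(self) -> float:
        """Min-max normalize raw_score into 0-100, clamping out-of-range values."""
        if self.max_possible <= self.min_possible:
            raise ValueError("max_possible must be greater than min_possible")
        span = self.max_possible - self.min_possible
        normalized = (self.raw_score - self.min_possible) / span
        return max(0.0, min(1.0, normalized)) * 100


def rank_by_potential(plans: List[CandidatePlan]) -> List[CandidatePlan]:
    """Order plans from highest to lowest relative potential; raw scores are ignored."""
    return sorted(plans, key=lambda p: p.relative_potential(), reverse=True)


plans = [
    CandidatePlan("plan_b", raw_score=90.0, min_possible=50.0, max_possible=150.0),
    CandidatePlan("plan_a", raw_score=70.0, min_possible=40.0, max_possible=72.0),
]

for plan in rank_by_potential(plans):
    print(f"{plan.name}: raw {plan.raw_score}, potential {plan.relative_potential():.1f}%")
```

Here plan_a ranks first despite its lower raw score (70 versus 90), because it reaches about 94% of its scenario's range while plan_b reaches only 40% of its own.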

Here's a simplified Python example demonstrating how a raw score might be calibrated to a relative potential percentage:

```python
def calibrate_planning_score(raw_score: float, min_possible: float, max_possible: float) -> float:
    """
    Calibrates a raw AI planning score to a relative potential percentage.

    Scores are normalized between 0 and 100 based on min/max possible values.
    """
    if max_possible <= min_possible:
        raise ValueError("Max possible score must be greater than min possible score.")

    # Normalize the score to a 0-1 range
    normalized_score = (raw_score - min_possible) / (max_possible - min_possible)

    # Clamp the score between 0 and 1 (in case raw_score is out of bounds)
    clamped_score = max(0.0, min(1.0, normalized_score))

    # Convert to percentage
    relative_potential = clamped_score * 100

    return relative_potential


# Example Usage:
raw_plan_score = 75.0
min_ai_score_expected = 50.0
max_ai_score_expected = 100.0

calibrated_score = calibrate_planning_score(raw_plan_score, min_ai_score_expected, max_ai_score_expected)
print(f"Raw Score: {raw_plan_score}, Relative Potential: {calibrated_score:.2f}%")
# Raw Score: 75.0, Relative Potential: 50.00%

raw_another_score = 40.0  # Below min_ai_score_expected, so it is clamped to 0%
calibrated_another = calibrate_planning_score(raw_another_score, min_ai_score_expected, max_ai_score_expected)
print(f"Raw Score: {raw_another_score}, Relative Potential: {calibrated_another:.2f}%")
# Raw Score: 40.0, Relative Potential: 0.00%
```

The calibrate_planning_score function takes a raw score along with the minimum and maximum scores the AI system can produce for a given planning scenario. It rescales the raw score linearly onto a 0-100% range, clamps scores that fall outside the bounds to 0% or 100%, and raises a ValueError if the maximum is not greater than the minimum. The result expresses the score as a percentage of its potential, which gives decision-makers a much clearer signal than an absolute value alone.
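Written as a formula, the function computes the following, using s for the raw score and s_min, s_max for the bounds passed in (this notation is introduced here for convenience). The second line works through the two example calls from the code above.

$$
\text{relative potential}(s) = 100 \cdot \min\left(1,\ \max\left(0,\ \frac{s - s_{\min}}{s_{\max} - s_{\min}}\right)\right), \qquad s_{\max} > s_{\min}
$$

$$
\frac{75 - 50}{100 - 50} = 0.5 \;\Rightarrow\; 50\%, \qquad \frac{40 - 50}{100 - 50} = -0.2 \;\Rightarrow\; 0\% \ \text{(clamped)}
$$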

The Impact

By calibrating planning scores to reflect relative AI potential, capevents ensures that our AI recommendations are not just numerically high or low, but meaningfully contextualized. Planners can compare plans on a common 0-100% scale even when the underlying scenarios produce very different raw score ranges, which supports more informed decisions, better plan quality and, ultimately, more successful events.

Actionable Takeaway

When working with AI-generated scores or predictions, always consider the context. Implement a calibration layer to transform absolute values into relative measures of potential or performance. This will provide clearer, more actionable insights and enhance the interpretability and utility of your AI's output.
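One way to add such a calibration layer without touching the model code is to wrap the scoring function. The sketch below uses a decorator; calibrated and score_plan are hypothetical names, and the fixed bounds of 50 and 100 are assumed values rather than anything capevents prescribes.

```python
import functools
from typing import Any, Callable


def calibrated(min_possible: float, max_possible: float):
    """Hypothetical decorator factory: turn a raw scoring function into one returning 0-100 potential."""
    if max_possible <= min_possible:
        raise ValueError("max_possible must be greater than min_possible")

    def decorator(score_fn: Callable[..., float]) -> Callable[..., float]:
        @functools.wraps(score_fn)  # keep the wrapped function's name and docstring
        def wrapper(*args: Any, **kwargs: Any) -> float:
            raw = score_fn(*args, **kwargs)
            normalized = (raw - min_possible) / (max_possible - min_possible)
            # Clamp out-of-range raw scores, then express as a percentage.
            return max(0.0, min(1.0, normalized)) * 100

        return wrapper

    return decorator


# Hypothetical raw scorer standing in for a real model call.
@calibrated(min_possible=50.0, max_possible=100.0)
def score_plan(plan: dict) -> float:
    return plan["raw_score"]


print(score_plan({"raw_score": 87.5}))   # 75.0
print(score_plan({"raw_score": 120.0}))  # 100.0
```

Callers only ever see calibrated values, so the bounds live in one place and can be changed per scenario without touching downstream code.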

Generated with Gitvlg.com